Artificial intelligence has long since become part of everyday life. At the same time, the promised productivity boost is still keeping us waiting. So are the high hopes for an AI-driven economic boom unfounded? That conclusion would be premature, because massive technological upheavals all have one thing in common: technology develops considerably faster than our ability to absorb it.

The Promise of Electricity: Delivered Late

Technological revolutions take surprisingly long.

In the 1880s, engineers outdid one another with visions of the transformations waiting just over the horizon — many of them quite accurate.

Thomas Edison correctly predicted that soon even the poorest households would be lit by electric light, that household appliances "from the coffee grinder to the sewing machine" would soon work at the push of a button thanks to electric motors, and above all: that the steam engine in factories would soon become obsolete.

Even though Edison and others would ultimately be proven right, the announced economic and societal transformation took considerably longer to materialize than expected.

Forty years after the first light bulbs hit the market and the first power plant went into operation, only about half of all American households and factories had been electrified — in almost all other countries, it took even longer.

Electricity was, in fact, a revolutionary general-purpose technology.

The refrigerator, the washing machine, and the vacuum cleaner ultimately freed women from oppressive housework.

Factories that used steam engines had to continuously burn coal to power systems of belts and gears. The switch to electricity meant a safer and cleaner daily life for factory workers.

But above all, electricity made new consumer goods so cheap that even the electric sewing machine Edison had predicted became obsolete in most households — sewing or repairing your own clothes simply isn't worth it anymore.

Given the obvious potential of electricity to set off a second industrial revolution, the main question is: why did it take so long? How can such an obviously useful technology need so long to catch on?

The same question was asked 100 years later about another general-purpose technology: the PC.

Intel brought the silicon microprocessor to market in 1971. Soon, computers could be built that no longer filled entire rooms but fit on an office desk.

In the following decades, the technology continued to spread, but even in 1990 only 10% of all companies worldwide were using computers. And above all: productivity gains were nowhere to be seen.

This revolutionary technology was supposed to massively drive economic development, just as electricity had before — but in reality, none of that was visible.

Nobel laureate Robert Solow remarked on this in 1987: "You can see the computer age everywhere but in the productivity statistics." In other words: the technology was everywhere, but the economic boom was nowhere to be seen.

History Doesn't Repeat Itself, but It Rhymes

History famously doesn't repeat itself, but it often rhymes, and a similar picture is emerging today: instead of energy or information, it is now intelligence that is being made available extremely cheaply.

The capabilities of even outdated language models like GPT-3.5 were, just 10 years ago, distant science fiction for most people. Anthropic CEO Dario Amodei describes AI as "a country of geniuses in a data center."

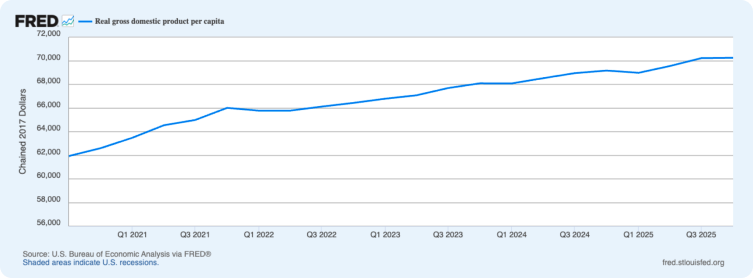

At the same time, the hoped-for economic boom has so far completely failed to materialize. Even the most sluggish organizations are now engaging with AI, and in the daily working lives of many employees it is a staple tool. But Germany has been economically stagnant for years, and looking at the US there is no AI boom to be seen either.

Interestingly, this development actually matches the predictions of economists who study the impact of new technologies on the economy and productivity:

"Economists assume that AI will significantly surpass the capabilities of today's systems by 2030; however, they predict that key indicators — including GDP and total factor productivity — will continue to track close to historical trends."

In other words: once again, a revolutionary technology is spreading, and once again the boom is, for now, failing to appear.

The good news: a look at the past shows why technology often takes far longer than expected to unfold its full effect — and what we can do to accelerate and steer it.

To appreciate the benefits of electricity in factories, it helps to picture what steam-powered industrial production looked like.

Factories of that era were overwhelming behemoths.

All the energy was generated by a large coal-fired steam engine. A huge drive shaft ran across all floors and powered every device in the factory via belts and gears. In practice, this meant all or nothing: if even a single device in the factory needed to run, the coal fire had to burn, and it set the entire machinery in motion.

In order to use each device flexibly with electricity — whenever and wherever it was needed — factories had to be completely rebuilt and replanned. Work processes that had been ingrained for decades no longer functioned. Workers needed entirely new skills because they had to perform entirely different work.

In other words: to actually realize the potential of electricity, enormous amounts of capital had to be invested, industrial production had to be reorganized from the ground up, and people had to be completely retrained.

It almost certainly won't take 40 years before artificial intelligence makes itself felt as a productivity driver.

At the same time, we shouldn't be surprised when revolutionary technologies take a surprisingly long time to unfold their effects. We should rather look closely at what we can do to accelerate and steer that unfolding.

The Early Bird Stays Smart Longer

For individuals and organizations alike, the ways to get there are unsurprising, but all the more effective for it:

1. Make Implicit Knowledge Visible

Very little knowledge in organizations is found in manuals and FAQs. A great deal of what is truly important sits in the heads of employees. In complex environments, not everything someone knows or can do can be explicitly written down. Often, however, organizations simply don't like to engage with knowledge management and communication culture. If the criterion for good work is the boss's "I know it when I see it," an AI will have even more trouble than employees already do. If new employees have to spend a day grilling colleagues to find out how a process really works, an AI doesn't stand a chance. And if project updates are shared sometimes in Teams channels, sometimes by phone, and sometimes over lunch, the AI will consistently rely on outdated information.

2. Use the Technology

One of the most important properties of learning processes is that new knowledge can be absorbed more quickly the more knowledge is already there. That means: if I have been regularly using LLMs for my work since 2022 and have read up on the technology behind them, I have a very nuanced sense of how to deploy them best, where their limits lie, and how new models differ. I have automated parts of my tasks, achieved successes along the way, and hit walls in some places. As a result, I can absorb every new feature quickly — the later I start, the more I have to catch up.

3. Train Your People

A guaranteed way to waste money: skimping on learning initiatives. An uncomfortable truth about training programs is that their effect often fizzles out completely. This happens especially when training is understood as a mandatory appointment whose main purpose is to tick a box on a to-do list. Bad training is even worse than no training at all: the effect is the same, but at least no training costs nothing. This point goes far beyond AI but of course applies here too: buying Copilot licenses and pairing them with a one-off introduction to the tool simply isn't enough. Yet organizations that do exactly this then wonder why the productivity gains fail to appear.

AI is new, different, and perhaps a notch bigger than previous technological revolutions. But as with every technology, contact with reality is more complicated, more challenging, and above all: less predetermined than initially thought.

The good news is: we ourselves have a say in what that contact looks like and how new technologies change our world — this time is no different.